Machine learning teams build training pipelines, model serving infrastructure, and MLOps automation. In that work, experiment tracking, model versioning, and deployment orchestration define production readiness.

Your team trains models, manages feature stores, and monitors inference quality, all while model drift degrades predictions over time. GPU resource contention delays experiments, model reproducibility requires careful version control, and A/B tests have to be coordinated with product teams.

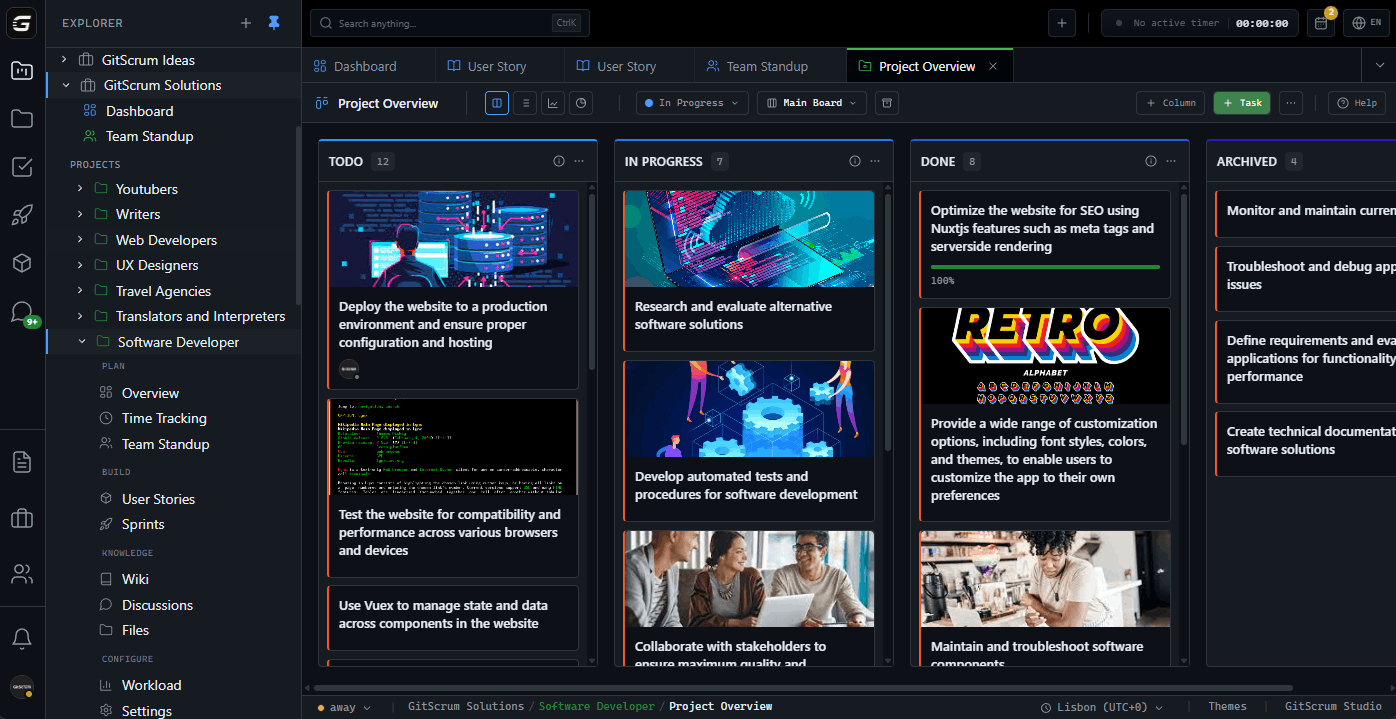

Sprint planning balances research experiments against infrastructure work. The Wiki documents model architectures and training configurations, Git integrations track code alongside model artifacts, and Discussions keep the team coordinated with data engineering on feature availability.

GitScrum helps ML teams in three ways: boards separate training work from inference work, user stories capture ML requirements together with their performance metrics, and workload management covers GPU resource allocation across experiments.

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.