The Productivity Measurement Problem

Bad metrics:
├─ Lines of code
│  └─ More lines = more bugs, not more value
├─ Commits per day
│  └─ Many small commits = gaming the metric
├─ GitHub green squares
│  └─ Activity theater, not output
├─ Hours online
│  └─ Presence != output

Goodhart's Law

The law: 'When a measure becomes a target, it ceases to be a good measure.'

Examples:
├─ Track lines of code
│  └─ Devs write verbose code
├─ Track commits
│  └─ Devs make tiny commits
├─ Track tickets closed
│  └─ Devs cherry-pick easy tickets
├─ Track hours
│  └─ Devs stay online but unfocused

Measure outcomes. Not activity.

Flow Metrics

Healthy indicators:
├─ Cycle Time
│  └─ Time from start to done
│  └─ How fast work flows
│  └─ Lower = better
├─ Flow Efficiency
│  └─ Active time / total time
│  └─ How much of the time is spent actually working
│  └─ Higher = better
├─ Work In Progress
│  └─ Items currently being worked on
│  └─ Too high = context switching
│  └─ Lower = focused
├─ Throughput
│  └─ Items completed per time period
│  └─ Consistent = healthy

These are much harder to game than activity counts. They reflect real flow.
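The four indicators above reduce to simple arithmetic over task records. The following is a minimal Python sketch, assuming a hypothetical `Task` record with start and finish timestamps plus logged active hours. The field and function names are illustrative assumptions, not a GitScrum API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Task:
    """Hypothetical task record; field names are assumptions for illustration."""
    started: datetime
    finished: Optional[datetime]  # None while the task is still in progress
    active_hours: float           # hours someone was actually working on it


def cycle_time_hours(task: Task) -> float:
    """Elapsed hours from start to done, for a finished task (lower is better)."""
    return (task.finished - task.started).total_seconds() / 3600


def flow_efficiency(task: Task) -> float:
    """Active time / total elapsed time (higher is better)."""
    return task.active_hours / cycle_time_hours(task)


def work_in_progress(tasks: list[Task]) -> int:
    """Number of tasks started but not yet finished."""
    return sum(1 for t in tasks if t.finished is None)


def throughput(tasks: list[Task], since: datetime, until: datetime) -> int:
    """Tasks completed within the period [since, until)."""
    return sum(
        1 for t in tasks
        if t.finished is not None and since <= t.finished < until
    )


# Made-up sample data, not real measurements.
t0 = datetime(2024, 1, 1, 9, 0)
tasks = [
    Task(t0, t0 + timedelta(hours=40), active_hours=12),
    Task(t0, t0 + timedelta(hours=20), active_hours=10),
    Task(t0, None, active_hours=3),
]

print(cycle_time_hours(tasks[0]))
print(flow_efficiency(tasks[0]))
print(work_in_progress(tasks))
print(throughput(tasks, t0, t0 + timedelta(days=1)))
```

The sketch uses wall-clock elapsed time for simplicity. A real tracker would likely count working hours only, which changes the flow efficiency denominator.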

Cycle Time

What it measures:
├─ Start: Developer picks up task
├─ End: Task deployed to production
├─ Time: 3 days
├─ Cycle time: 3 days

What affects it:
├─ Task size (smaller = faster)
├─ Review wait time
├─ Deployment frequency
├─ Blockers

Tracking shows:
├─ Average cycle time: 4 days
├─ Tasks > 1 week: 15%
├─ Blockers add: 1.5 days average
├─ Review wait: 8 hours average

Improve cycle time. Not individual activity.
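Summary figures such as the average cycle time and the share of tasks over one week are plain aggregates of per-task cycle times. Here is a small Python sketch of that calculation. The function name, the 7-day threshold default, and the sample values are assumptions for illustration only.

```python
from statistics import mean


def summarize_cycle_times(cycle_days: list[float], slow_threshold_days: float = 7.0) -> dict:
    """Summarize team-level cycle times (in days) for a reporting period."""
    slow = [d for d in cycle_days if d > slow_threshold_days]
    return {
        "average_days": mean(cycle_days),
        "share_over_threshold": len(slow) / len(cycle_days),
    }


# Made-up sample of five completed tasks, not real data.
sample = [2.0, 3.0, 3.0, 4.0, 8.0]
summary = summarize_cycle_times(sample)
print(summary)
```

The input is a team-wide list of task durations, so the summary describes the system rather than any one developer.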

Flow Efficiency

Real eye-opener:
├─ Task total time: 5 days (40 hours)
├─ Time actually worked: 12 hours
├─ Flow efficiency: 12/40 = 30%

Where did 70% go?
├─ Waiting for review: 8 hours
├─ Waiting for clarification: 6 hours
├─ Blocked by other team: 4 hours
├─ Context switching: 6 hours
├─ Meetings: 4 hours

Developers aren't slow. The system is slow.

Context Switching Cost

Research shows:
├─ 1 project: 100% productive time
├─ 2 projects: 80% (20% lost switching)
├─ 3 projects: 55% (45% lost)
├─ 4 projects: 35% (65% lost)
├─ 5 projects: 15% (85% lost)

WIP limits help:
├─ Max 2 tasks in progress
├─ Finish before starting new
├─ Focus produces better work
├─ Less context switching

Multitasking is a lie.
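A WIP limit like the one above is easy to check mechanically. The sketch below is one possible way to do it in Python, assuming a hypothetical board represented as a mapping from a lane (a person or a column) to its in-progress task IDs. The names and sample data are illustrative assumptions.

```python
WIP_LIMIT = 2  # "Max 2 tasks in progress", taken from the list above


def can_start_new_task(in_progress: list[str], limit: int = WIP_LIMIT) -> bool:
    """Finish before starting new: allow a new task only below the limit."""
    return len(in_progress) < limit


def over_limit(board: dict[str, list[str]], limit: int = WIP_LIMIT) -> dict[str, int]:
    """Return each lane that exceeds the limit, with the number of excess tasks."""
    return {
        lane: len(task_ids) - limit
        for lane, task_ids in board.items()
        if len(task_ids) > limit
    }


# Hypothetical board state.
board = {
    "backend": ["API-1", "API-2", "API-3"],
    "frontend": ["UI-7"],
}

print(can_start_new_task(board["frontend"]))
print(can_start_new_task(board["backend"]))
print(over_limit(board))
```

A check like this works best as a prompt for conversation ("what can we finish first?") rather than as a hard gate.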

Blocker Visibility

What blocks developers:
├─ Waiting for:
│  ├─ Code review: 23 hours avg
│  ├─ Design decisions: 18 hours avg
│  ├─ Access requests: 12 hours avg
│  ├─ Other team: 48 hours avg
│  └─ Clarification: 8 hours avg

Make blockers visible:
├─ Task tagged as 'Blocked'
├─ Reason documented
├─ Time blocked tracked
├─ Patterns surface

Fix blockers. Not developer 'productivity'.
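Once each blocked period is recorded with a reason and a duration, the patterns surface from a simple group-and-average. A minimal Python sketch follows, assuming blocker events are available as hypothetical `(reason, hours_blocked)` pairs. The sample events are made up.

```python
from collections import defaultdict
from statistics import mean


def average_blocked_hours(events: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Average blocked hours per documented reason, worst first."""
    by_reason: dict[str, list[float]] = defaultdict(list)
    for reason, hours in events:
        by_reason[reason].append(hours)
    averages = {reason: mean(hours) for reason, hours in by_reason.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


# Made-up blocker log, not real data.
events = [
    ("code review", 20.0),
    ("code review", 26.0),
    ("other team", 48.0),
    ("clarification", 8.0),
]

ranking = average_blocked_hours(events)
for reason, hours in ranking:
    print(f"{reason}: {hours:.1f} hours avg")
```

Sorting worst-first puts the blocker that costs the most waiting time at the top of the list for the next retro.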

Team Health Metrics

What matters:
├─ Sprint completion rate
│  └─ Are we delivering commitments?
├─ Bug escape rate
│  └─ Quality of output
├─ Deployment frequency
│  └─ How often we ship
├─ Change failure rate
│  └─ How often deploys cause problems
├─ Mean time to recovery
│  └─ How fast we fix issues

Deployment frequency, change failure rate, and mean time to recovery are DORA metrics. Research-backed.

Individual vs. Team Metrics

Dangerous:
├─ Individual velocity
├─ Individual commit count
├─ Individual ticket count
├─ Individual lines of code

These create:
├─ Competition instead of collaboration
├─ Cherry-picking easy work
├─ Avoiding helping teammates
├─ Gaming the system

Healthy:
├─ Team velocity
├─ Team cycle time
├─ Team deployment frequency
├─ Team quality metrics

Team wins together. Or fails together.
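Team-level delivery metrics such as deployment frequency, change failure rate, and mean time to recovery can be computed from a deploy log with no author attached. The Python sketch below assumes a hypothetical `Deploy` record. The field names and sample values are illustrative, and the definitions are simplified.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Deploy:
    """Hypothetical deploy record; deliberately has no author field."""
    day: int                                   # day index within the reporting window
    failed: bool = False                       # did this deploy cause a production problem?
    hours_to_recover: Optional[float] = None   # set only for failed deploys


def team_delivery_metrics(deploys: list[Deploy], window_days: int) -> dict:
    """Compute simplified team-level delivery metrics for one window."""
    failures = [d for d in deploys if d.failed]
    recoveries = [d.hours_to_recover for d in failures if d.hours_to_recover is not None]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "change_failure_rate": len(failures) / len(deploys) if deploys else 0.0,
        "mean_hours_to_recovery": sum(recoveries) / len(recoveries) if recoveries else None,
    }


# Made-up deploy log for a 10-day window.
deploys = [
    Deploy(day=1),
    Deploy(day=2, failed=True, hours_to_recover=3.0),
    Deploy(day=4),
    Deploy(day=5, failed=True, hours_to_recover=1.0),
    Deploy(day=8),
]

metrics = team_delivery_metrics(deploys, window_days=10)
print(metrics)
```

Leaving the author out of the record is a design choice: the numbers can only be read as a property of the team and its pipeline.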

Developer Experience

Productivity through experience:
├─ Local dev speed
│  └─ How fast to set up?
│  └─ How fast to build?
├─ CI/CD speed
│  └─ How long for feedback?
│  └─ Are tests flaky?
├─ Documentation quality
│  └─ Can devs find answers?
│  └─ Is it accurate?
├─ Onboarding time
│  └─ How fast to first commit?
│  └─ How fast to productive?

Invest in developer experience. Productivity follows.

Flow State Protection

Deep work requires:
├─ Uninterrupted blocks of 2+ hours
├─ Clear next task
├─ Minimal context switching
├─ Async communication by default

Organizational changes:
├─ No-meeting blocks
│  └─ Tuesday/Thursday mornings
├─ Async-first communication
│  └─ Slack for async, not instant replies
├─ Batch interruptions
│  └─ Office hours for questions
├─ Respect focus time
│  └─ Don't mention unless urgent

Protect focus. Productivity follows.

Survey-Based Insights

Ask developers:
├─ How often do you get 2+ hours uninterrupted?
├─ What blocks you most often?
├─ What would make you more productive?
├─ How would you rate your tooling?
├─ Do you feel trusted to manage your time?

Regular pulse surveys:
├─ Monthly or quarterly
├─ Anonymous
├─ Act on results
├─ Track improvements

Ask the people doing the work.
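Tracking improvements between pulse surveys can be as simple as comparing per-question averages from one round to the next. A minimal Python sketch follows, assuming anonymous responses arrive as dictionaries of hypothetical 1-to-5 scores. The question keys and sample scores are made up.

```python
def pulse_averages(responses: list[dict[str, int]]) -> dict[str, float]:
    """Average score per question across anonymous responses (no names stored)."""
    questions = responses[0].keys()
    return {q: sum(r[q] for r in responses) / len(responses) for q in questions}


def changes(previous: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Change in average score per question between two survey rounds."""
    return {q: current[q] - previous[q] for q in current if q in previous}


# Made-up scores on a 1-5 scale for two survey rounds.
round_1 = [
    {"tooling": 2, "trust": 4},
    {"tooling": 3, "trust": 4},
    {"tooling": 4, "trust": 4},
]
round_2 = [
    {"tooling": 4, "trust": 4},
    {"tooling": 4, "trust": 5},
    {"tooling": 4, "trust": 3},
]

before = pulse_averages(round_1)
after = pulse_averages(round_2)
print(changes(before, after))
```

Only aggregates are kept, which fits the anonymity requirement. Free-text answers such as "what blocks you most often?" still need to be read by a person.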

Productivity Anti-Patterns

Don't do these:
├─ Keystroke logging
│  └─ Destroys trust
├─ Screenshot monitoring
│  └─ Surveillance, not management
├─ Online time tracking
│  └─ Presence != output
├─ Daily status reports
│  └─ Overhead, not insight
├─ Stack ranking developers
│  └─ Destroys teamwork

These signal distrust. Distrust destroys productivity.

Outcomes Over Output

Measure:
├─ Customer impact
│  └─ Did users benefit?
├─ Business outcomes
│  └─ Did metrics move?
├─ System health
│  └─ Is code quality improving?
├─ Team capability
│  └─ Are we learning and growing?

Not:
├─ Lines of code
├─ Hours worked
├─ Commits made
├─ Tickets closed

Impact matters. Activity doesn't.

Leadership Role

Managers should:
├─ Remove blockers
│  └─ Clear the path for work
├─ Protect focus time
│  └─ Shield from interruptions
├─ Provide clarity
│  └─ Clear goals, clear priorities
├─ Trust the team
│  └─ Judge by results, not presence
├─ Enable, not monitor
│  └─ Servant leadership

Trust developers. They'll perform better.

Continuous Improvement

Use data for improvement:
├─ Review cycle time monthly
│  └─ What's slowing us down?
├─ Discuss in retros
│  └─ What blockers keep appearing?
├─ Experiment
│  └─ Try solutions, measure results
├─ Share learnings
│  └─ Team learns together

Data for insight. Not surveillance.

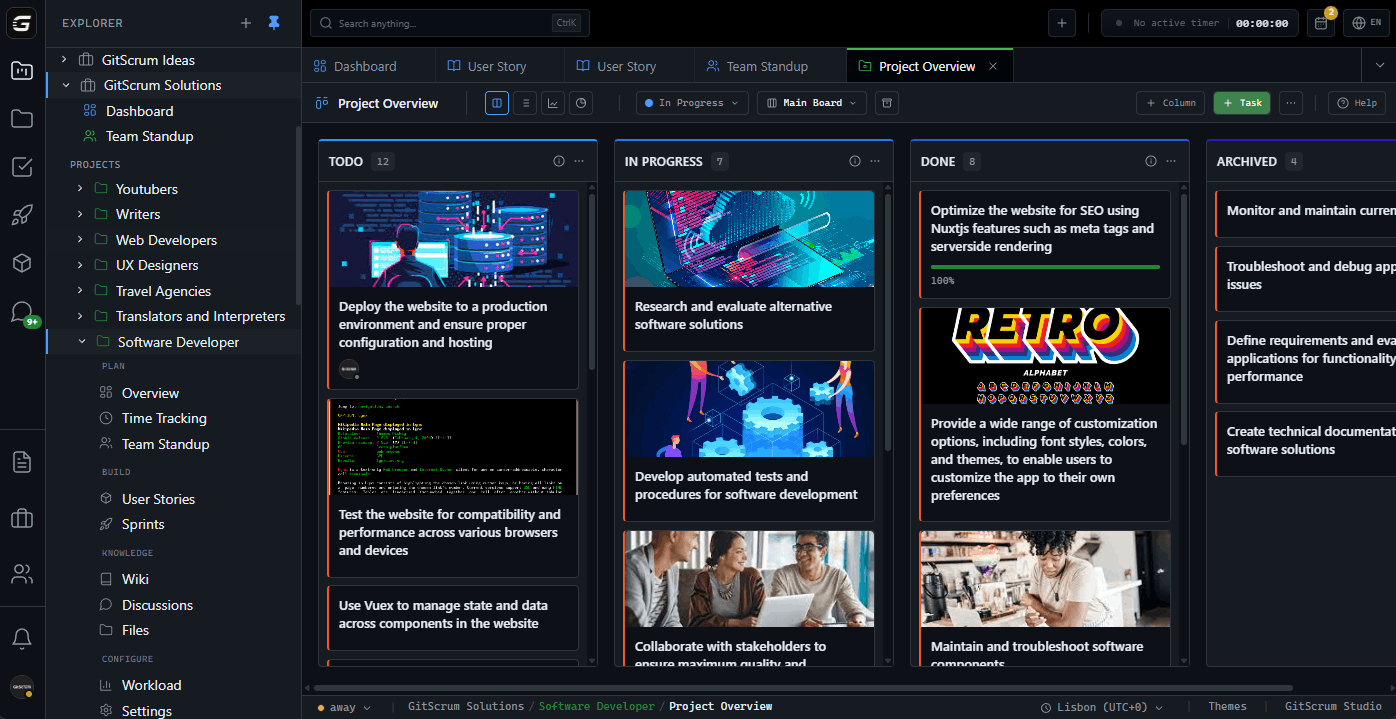

Getting Started

1. Sign up for GitScrum ($8.90/user, 2 free)
2. Stop measuring lines/commits/hours
3. Focus on cycle time and throughput
4. Make blockers visible
5. Track team metrics, not individual
6. Survey developers regularly
7. Improve the system, trust the people

Measure flow. Trust developers. Fix systems.

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.