The Gut Feel Problem

Status meeting:
- PM: 'How's the project going?'
- '...maybe... I feel like yes?'

Reality:
- No objective data
- Burndown shows behind (but feels wrong)
- Story points don't reflect actual work
- Velocity fluctuates wildly
- Estimates are guesses

Decisions based on feeling. Not data.

The Wrong Metrics

What gets measured:
- Lines of code written
- Hours logged
- Commits per day
- PRs per week
- Keystrokes per hour

What these measure:
- Activity, not output
- Presence, not progress
- Busyness, not value

What happens:
- Gaming the metrics
- Developers feel surveilled
- Trust erodes
- Quality suffers
- Best people leave

Goodhart's Law: When a measure becomes a target, it ceases to be a good measure.

The Right Metrics

Flow metrics:

1. Cycle Time
   - Start to done
   - How long work takes
   - Predictability indicator

2. Throughput
   - Items completed per period
   - Team capacity indicator
   - Not points, actual items

3. Work in Progress (WIP)
   - Active items right now
   - Context switching indicator
   - Lower = faster flow

4. Lead Time
   - Request to delivery
   - Customer perspective
   - End-to-end efficiency

5. Flow Efficiency
   - Active time / Total time
   - Reveals wait states
   - Usually shocking (10-15%)

Team metrics, not individual surveillance.
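The definitions above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular tool computes them: the `WorkItem` record, its field names, and the sample dates are all hypothetical, and "total time" in flow efficiency is assumed to mean lead time.

```python
from dataclasses import dataclass
from datetime import datetime

SECONDS_PER_DAY = 86_400


@dataclass
class WorkItem:
    """Hypothetical work-item record; the field names are illustrative."""
    requested: datetime   # request entered the backlog
    started: datetime     # work began
    done: datetime        # work was delivered
    active_days: float    # days spent actively working, excluding waits


def cycle_time_days(item: WorkItem) -> float:
    """Cycle time: start to done."""
    return (item.done - item.started).total_seconds() / SECONDS_PER_DAY


def lead_time_days(item: WorkItem) -> float:
    """Lead time: request to delivery."""
    return (item.done - item.requested).total_seconds() / SECONDS_PER_DAY


def flow_efficiency(item: WorkItem) -> float:
    """Active time / total time, taking lead time as the total (an assumption)."""
    return item.active_days / lead_time_days(item)


# Sample item (made-up dates): requested Jan 1, started Jan 8, done Jan 11.
item = WorkItem(
    requested=datetime(2024, 1, 1),
    started=datetime(2024, 1, 8),
    done=datetime(2024, 1, 11),
    active_days=1.5,
)

print(f"Cycle time: {cycle_time_days(item):.1f} days")   # 3.0 days
print(f"Lead time: {lead_time_days(item):.1f} days")     # 10.0 days
print(f"Flow efficiency: {flow_efficiency(item):.0%}")   # 15%
```

In this sample the item waited seven days before anyone started it, which is why the flow efficiency lands in the 10-15% range even though the work itself took a day and a half.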

Cycle Time Analytics

What it shows:
- Average cycle time: 4.2 days
- P85 cycle time: 6.5 days
- Trend: Improving (was 5.1 days)

Breakdown:
- In Progress: 1.5 days
- In Review: 2.1 days
- In Testing: 0.6 days

Insight:
- Review is the bottleneck
- Action: Add reviewers or pair reviewing
- Not: 'Developer X is slow'

Process insight, not blame.

Throughput Tracking

Weekly throughput:
- Week 1: 12 items
- Week 2: 14 items
- Week 3: 11 items
- Week 4: 13 items
- Average: 12.5 items/week

Forecasting:
- Backlog: 50 items
- At current rate: 4 weeks
- Confidence: 85%

Projection based on actual data.
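The forecast arithmetic above can be reproduced directly from the four weekly counts. The sketch below also shows one common way to attach a confidence level, by resampling past weeks (a Monte Carlo simulation). That second part is an assumption: the text does not say how its 85% figure is derived, and the function names here are hypothetical.

```python
import math
import random
from statistics import mean

weekly_throughput = [12, 14, 11, 13]   # items completed in weeks 1-4
backlog = 50                            # items still to do


def weeks_at_average_rate(backlog_items, history):
    """Backlog divided by the average weekly throughput."""
    return backlog_items / mean(history)


def simulate_weeks(backlog_items, history, rng):
    """One simulated future: draw a past week at random until the backlog is gone.

    Assumes every entry in history is positive, otherwise this may never end.
    """
    remaining, weeks = backlog_items, 0
    while remaining > 0:
        remaining -= rng.choice(history)
        weeks += 1
    return weeks


def forecast_percentile(backlog_items, history, pct=85, runs=10_000, seed=0):
    """Number of weeks within which pct% of the simulated futures finish."""
    rng = random.Random(seed)
    outcomes = sorted(simulate_weeks(backlog_items, history, rng) for _ in range(runs))
    rank = max(1, math.ceil(pct / 100 * runs))   # nearest-rank percentile
    return outcomes[rank - 1]


print(mean(weekly_throughput))                            # 12.5
print(weeks_at_average_rate(backlog, weekly_throughput))  # 4.0
# With this history every simulated run finishes in 4 or 5 weeks.
print(forecast_percentile(backlog, weekly_throughput))
```

The average-rate figure is a midpoint, so roughly half of the plausible futures take longer. A percentile from the simulation gives a date the team can commit to with a stated level of confidence.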

Not wishful estimates.

WIP Limits

Current WIP:
- Team capacity: 6 parallel items
- Current WIP: 12 items
- Status: Overloaded

Problem:
- 12 things started, none finished
- Context switching overhead
- Everything takes longer
- Nothing ships

Action:
- Stop starting, start finishing
- WIP limit: 6 items
- New work waits until slot opens
- Faster flow through completion

Blocker Analysis

Blockers this month:
- Waiting for approval: 8 days total
- Waiting for dependencies: 12 days total
- Waiting for review: 15 days total
- Unclear requirements: 6 days total

Biggest bottleneck: Code review

Action:
- Review SLA: 24 hours max
- Smaller PRs: <100 lines
- Pair programming option

Data reveals bottleneck.
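Finding the biggest bottleneck from the blocker totals above is a simple ranking. A minimal sketch follows, using the figures from the list; the dictionary layout and the `rank_blockers` helper are illustrative assumptions.

```python
# Blocked days per cause this month, taken from the list above.
blocked_days = {
    "Waiting for approval": 8,
    "Waiting for dependencies": 12,
    "Waiting for review": 15,
    "Unclear requirements": 6,
}


def rank_blockers(days_by_cause):
    """Return (cause, days, share of all blocked days), largest first."""
    total = sum(days_by_cause.values())
    ranked = sorted(days_by_cause.items(), key=lambda pair: pair[1], reverse=True)
    return [(cause, days, days / total) for cause, days in ranked]


ranking = rank_blockers(blocked_days)
for cause, days, share in ranking:
    print(f"{cause}: {days} days ({share:.0%})")

biggest_cause = ranking[0][0]
print(f"Biggest bottleneck: {biggest_cause}")   # Waiting for review
```

Reporting the share alongside the raw days makes it clear that review waits account for more than a third of the 41 blocked days.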

Not guessing.

Sprint Analytics

Sprint 15 Summary:
- Committed: 34 points
- Completed: 28 points
- Completion rate: 82%

Trend:
- Sprint 12: 75%
- Sprint 13: 78%
- Sprint 14: 80%
- Sprint 15: 82%

Improving consistently. Not perfect, but trending right.

Velocity Reality Check

Velocity myth:
- 'Our velocity is 34 points'
- 'We should commit to 34 points'
- Sprint ends: 28 completed
- 'Team underperformed'

Velocity reality:
- Average: 31 points
- Range: 24-38 points
- Standard deviation: 4.2

Commitment should use:
- P50: 31 points (50% confidence)
- P85: 27 points (85% confidence)

Realistic planning.
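The P50 and P85 commitments above are consistent with treating sprint velocity as roughly normally distributed with mean 31 and standard deviation 4.2. That normal approximation is an assumption on our part (the text does not state its method), and `commitment` is a hypothetical helper name.

```python
from statistics import NormalDist


def commitment(mean_velocity, std_dev, confidence):
    """Points the team is expected to reach with at least `confidence` probability.

    Uses a normal approximation of velocity, which is an assumption:
    a team with enough history could use empirical percentiles instead.
    """
    velocity = NormalDist(mu=mean_velocity, sigma=std_dev)
    # An 85% chance of completing at least X points means X is the 15th percentile.
    return velocity.inv_cdf(1 - confidence)


p50 = commitment(31, 4.2, 0.50)
p85 = commitment(31, 4.2, 0.85)
print(round(p50), round(p85))   # 31 27
```

Higher confidence means committing to fewer points: the 85% figure sits about one standard deviation below the average.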

Not optimistic fantasy.

Burnup vs Burndown

Burndown problem:
- Shows 'behind schedule'
- Doesn't show scope changes
- Panic-inducing
- Misleading

Burnup advantage:
- Shows work completed (going up)
- Shows scope changes (top line moving)
- Reveals: 'We did the work, scope grew'
- Honest picture

Scope crept 20%? Burnup shows it. Burndown hides it.
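The difference is easy to see with numbers. In the sketch below, a burnup keeps completed work and total scope as two separate series, while a burndown collapses them into a single "remaining" figure. The `BurnupPoint` type and the sample data are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class BurnupPoint:
    day: int
    completed: int   # cumulative points completed
    scope: int       # total points in scope on that day


def scope_growth_pct(points):
    """Percentage change in total scope between the first and last point."""
    first, last = points[0].scope, points[-1].scope
    return (last - first) / first * 100


def burndown_remaining(points):
    """What a burndown plots: scope minus completed, one number per day."""
    return [p.scope - p.completed for p in points]


# Hypothetical sprint: scope grows from 100 to 120 points late on.
points = [
    BurnupPoint(day=1, completed=10, scope=100),
    BurnupPoint(day=5, completed=50, scope=100),
    BurnupPoint(day=10, completed=90, scope=120),
]

print(scope_growth_pct(points))     # 20.0
print(burndown_remaining(points))   # [90, 50, 30]
```

On day 10 the burndown shows 30 points remaining and nothing else. The burnup shows 90 points completed against an original scope of 100, plus 20 points of added scope.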

Cumulative Flow Diagram

Visual of flow:
- Backlog band: Work waiting
- In Progress band: Work active
- Done band: Work completed

Healthy pattern:
- Bands consistent width
- Steady flow through stages
- No bulges

Unhealthy pattern:
- In Progress bulge: WIP too high
- Review bulge: Bottleneck
- Testing bulge: QA overloaded

One chart shows everything.

Predictability

Can you predict delivery?

Low predictability:
- Cycle time: 2-20 days (range: 18)
- Forecast: 'Sometime between 2 weeks and 3 months'
- Useless for planning

High predictability:
- Cycle time: 3-6 days (range: 3)
- Forecast: 'Next week, 85% confidence'
- Reliable for commitments

Improve predictability:
- Reduce variability
- Smaller work items
- Remove blockers
- Limit WIP

Individual vs Team Metrics

Dangerous:
- Developer X completed 3 tasks
- Developer Y completed 7 tasks
- 'Developer X is slacking'

Reality:
- X did complex infrastructure
- Y did simple bug fixes
- X unblocked entire team
- Y's fixes may have created bugs

Team metrics safe:
- Team throughput
- Team cycle time
- Team blockers

Individual metrics dangerous. Context matters.

Progress Reports

Weekly status (auto-generated):
- Tasks completed: 14
- Cycle time avg: 3.8 days
- Blockers resolved: 5
- Sprint progress: 67%
- Forecast: On track for sprint goal

No manual report writing. Data speaks.

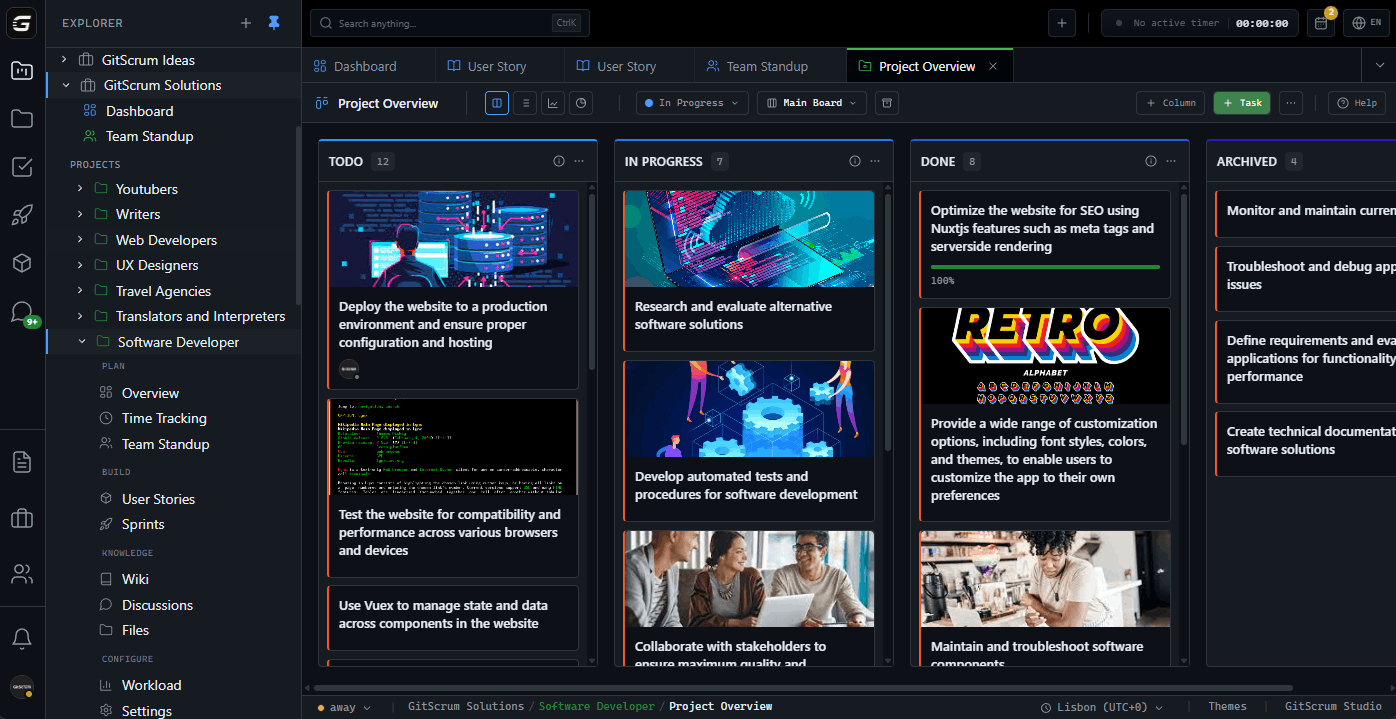

Portfolio View

Multiple projects:
- Project A: Healthy (green)
  - Throughput: stable
  - Cycle time: improving
  - No blockers
- Project B: At risk (yellow)
  - Throughput: declining
  - Cycle time: increasing
  - 3 blockers > 5 days
- Project C: Critical (red)
  - Throughput: stopped
  - Cycle time: N/A
  - Blocked on dependency

Management view. No surprises.
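A health status like the ones above can be derived from the underlying flow data with a small rule set. The rules below are an assumption chosen to reproduce the three examples, not a documented GitScrum algorithm, and the `ProjectSnapshot` fields are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Health(Enum):
    GREEN = "Healthy"
    YELLOW = "At risk"
    RED = "Critical"


@dataclass
class ProjectSnapshot:
    name: str
    throughput_trend: str   # "stable", "improving", "declining" or "stopped"
    stale_blockers: int     # blockers open for more than 5 days


def classify(project: ProjectSnapshot) -> Health:
    """Illustrative rules: stopped flow is critical, decline or stale blockers is a risk."""
    if project.throughput_trend == "stopped":
        return Health.RED
    if project.throughput_trend == "declining" or project.stale_blockers > 0:
        return Health.YELLOW
    return Health.GREEN


portfolio = [
    ProjectSnapshot("Project A", "stable", 0),
    ProjectSnapshot("Project B", "declining", 3),
    ProjectSnapshot("Project C", "stopped", 1),
]

for project in portfolio:
    print(f"{project.name}: {classify(project).value}")
```

Keeping the rules explicit means a yellow or red status can always be traced back to the metric that triggered it.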

Retrospective Data

Retro with data:
- 'What slowed us down?'
- Data: Review wait time increased 40%
- 'Why?'
- Data: PR size increased to 300 lines avg
- Action: PR size limit 100 lines

Not: 'I feel like reviews are slow'
But: 'Review time is 2.1 days, up from 1.2 last month'

Data-informed improvement.

Stakeholder Dashboard

Executive view:
- Project: On track (85% confidence)
- Next milestone: Feb 15
- Risks: 1 dependency unresolved
- Health: Green

They see status. You see details. Same data, different views.

Trend Analysis

Last 6 months:
- Cycle time: 6.2 -> 4.1 days (34% improvement)
- Throughput: 10 -> 14 items/week (40% improvement)
- Blockers: 8 -> 3 per sprint (62% reduction)

Process improvements working. Data proves it.
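The percentages above are plain relative changes against the starting value. A short sketch, with a hypothetical `pct_change` helper and the before/after figures from the list:

```python
def pct_change(before, after):
    """Relative change from before to after, in percent (negative means a decrease)."""
    return (after - before) / before * 100


# (before, after) pairs from the six-month trend.
trends = {
    "Cycle time (days)": (6.2, 4.1),
    "Throughput (items/week)": (10, 14),
    "Blockers per sprint": (8, 3),
}

for metric, (before, after) in trends.items():
    print(f"{metric}: {pct_change(before, after):+.1f}%")
```

For cycle time and blockers a negative change is the improvement. The blocker figure works out to -62.5%, which the list reports as 62%.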

Not Surveillance

What we track:
- How long work takes (process)
- Where work gets stuck (bottleneck)
- How much work flows (throughput)
- What blocks progress (impediments)

What we DON'T track:
- Keystrokes
- Mouse movements
- Screen captures
- Hours at desk
- Git commits per person

Process metrics, not people surveillance. Trust preserved.

Getting Started

1. Sign up for GitScrum ($8.90/user, 2 free)
2. Work naturally (no behavior change)
3. Metrics collect automatically
4. Review weekly dashboard
5. Identify one bottleneck
6. Take action
7. Measure improvement

Data-driven improvement. Without surveillance. Without micromanagement.

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.