Organizations cannot optimize what they cannot measure, and they cannot measure what they cannot compare.

When each team uses different tools with different metrics, performance comparison becomes meaningless. Consider story points.

Team Alpha estimates aggressively, so their '5' is another team's '13'. Team Beta pads estimates conservatively, so their '8' represents less work than Team Alpha's '3'.

Team Gamma does not use points at all—they track hours. Comparing these teams by 'points delivered' or 'velocity' produces nonsense.

A team delivering 50 points might be outperforming a team delivering 100 points, but the numbers suggest the opposite.

Beyond estimation practices, different tools measure different things. One team's 'cycle time' is calculated from task creation. Another's starts from the first commit. A third measures from when the task enters 'In Progress.' These are all valid measurements, but they are not interchangeable. Comparing them creates false impressions of relative performance.
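To make the difference concrete, here is a minimal sketch in Python. The task, its timestamps, and the `cycle_time_days` helper are all hypothetical, invented for illustration; they do not come from any particular tool.

```python
from datetime import datetime

# Hypothetical timestamps for a single task (illustrative values only).
task = {
    "created": datetime(2024, 3, 1, 9, 0),
    "in_progress": datetime(2024, 3, 4, 9, 0),
    "first_commit": datetime(2024, 3, 5, 9, 0),
    "done": datetime(2024, 3, 8, 9, 0),
}


def cycle_time_days(task, start_event):
    """Return the days elapsed from the chosen start event until 'done'."""
    return (task["done"] - task[start_event]).total_seconds() / 86400


# The three start definitions described above, applied to the same task.
for start_event in ("created", "in_progress", "first_commit"):
    print(f"from {start_event}: {cycle_time_days(task, start_event):.1f} days")
```

The same task reports a cycle time of 7, 4, or 3 days depending only on where the clock starts, so a side-by-side comparison says more about the definition than about the team.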

Leadership ends up making decisions based on incomparable metrics. Resource allocation, team sizing, and project assignments are all influenced by numbers that cannot be meaningfully compared. The illusion of data-driven management hides the reality of guess-driven decisions.

A unified platform ensures consistent measurement across teams: same tools, same definitions, same calculations. When Team Alpha delivers 50 points and Team Beta delivers 40, that comparison means something because both teams measure the same way. Leadership can actually make informed decisions.

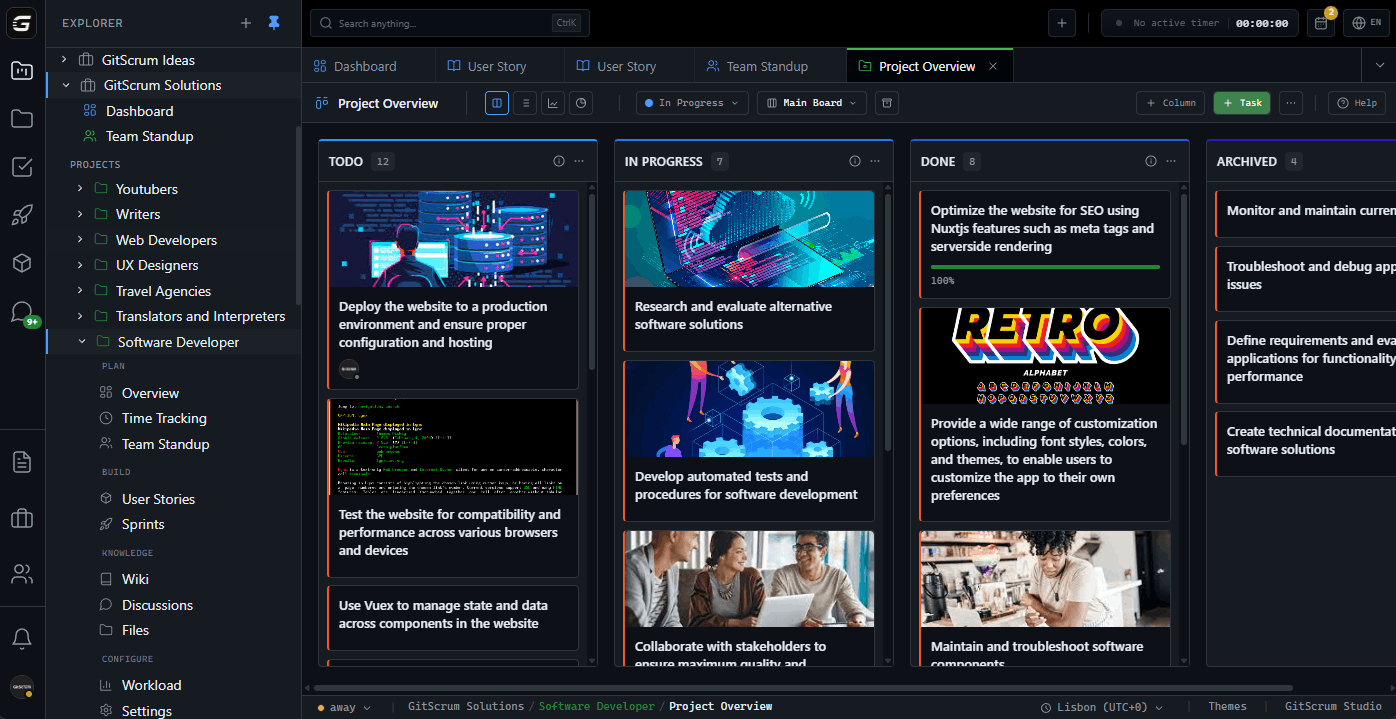

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.