ML Work Is Different

Typical ML workflow:
├─ Explore data (1-2 weeks)
├─ Build features (2-4 weeks)
├─ Train models (days to weeks)
├─ Run experiments (100+ variations)
├─ Evaluate results (ongoing)
├─ Deploy winner (finally!)

Not predictable. 90% of experiments fail. That's expected, not a problem.

Why Traditional PM Fails ML

Sprint planning for ML:
├─ 'Improve model accuracy' - how big is this?
├─ Story points for research? Meaningless
├─ 2-week sprints when experiments take 3?
├─ 'Done' for something that needs retraining?
├─ Stakeholder: 'Is it done yet?' after month 2

Result: Teams stop using PM tools. Work happens in notebooks. Stakeholders have no visibility.

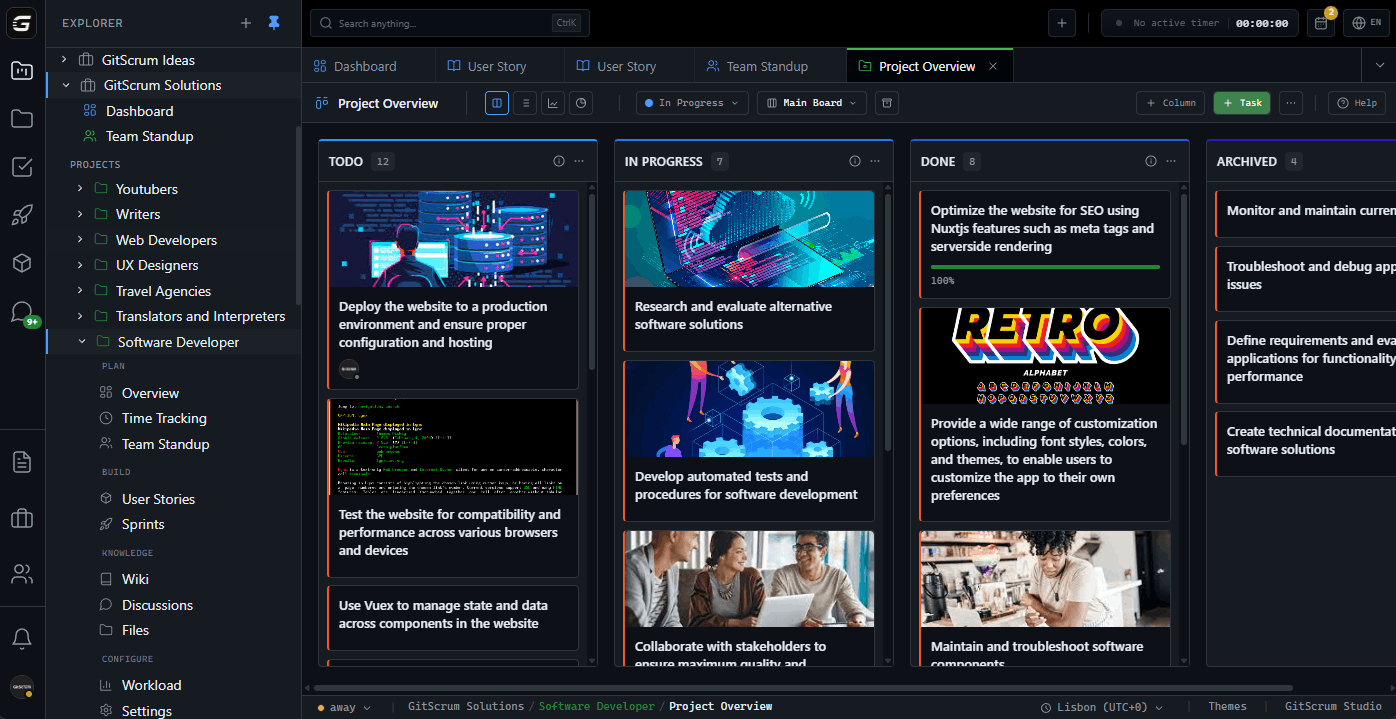

The Real ML Tracking Needs

What ML teams actually track:
├─ What experiments ran
├─ What metrics improved
├─ What made it to production
├─ What's blocking production
├─ Data pipeline status
├─ Model drift monitoring
├─ Research vs engineering time

Not: how many story points completed.

GitScrum for ML Teams

Adapting to ML reality:
├─ Research tracks (flexible scope)
├─ Production tracks (fixed scope)
├─ Experiment linking via Git
├─ Milestone = model version
├─ Wiki for research documentation

Flexible enough for research. Structured enough for production.

Research vs Production Workflow

Research phase:
├─ Timeboxed exploration (not story-pointed)
├─ 'Investigate X' tasks
├─ Expected outcome: Decision (not feature)
├─ Link to notebooks via Git
├─ Document findings in wiki

Production phase:
├─ Traditional sprint tracking
├─ 'Deploy model v2' = clear deliverable
├─ Story points meaningful
├─ Git-linked to model repo
├─ CI/CD integration

Two workflows. One tool.

Experiment Tracking Integration

ML experiment trackers:
├─ MLflow
├─ Weights & Biases
├─ Neptune
├─ DVC
├─ Comet

GitScrum approach:
├─ Experiments live in tracker
├─ Tasks link to experiment repos
├─ 'Best experiment' referenced in task
├─ Deploy task links to model Git
├─ Not replacing MLflow, complementing it

Track the project, not the experiments.
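To make the "task references the best experiment" idea concrete, here is a minimal sketch in plain Python. It is not GitScrum's data model or API: the `Task` and `ExperimentRef` classes, their fields, the example repo URL, and the run IDs are all hypothetical, and it assumes a single headline metric where higher is better. The point is only that the task stores pointers to runs, while the runs themselves stay in the tracker.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ExperimentRef:
    """Pointer to a run that lives in an external tracker (hypothetical shape)."""
    tracker: str   # name of the tracker, e.g. "mlflow"
    run_id: str    # identifier assigned by the tracker
    metric: float  # headline metric copied from the tracker; higher is better


@dataclass
class Task:
    """A project task that references experiments instead of storing them."""
    title: str
    repo_url: str
    experiments: list[ExperimentRef] = field(default_factory=list)

    def best_experiment(self) -> Optional[ExperimentRef]:
        """Return the referenced run with the highest metric, or None if empty."""
        if not self.experiments:
            return None
        return max(self.experiments, key=lambda ref: ref.metric)


# Illustrative data only: placeholder URL, run IDs, and metric values.
task = Task(
    title="Investigate gradient boosting for churn model",
    repo_url="https://example.com/ml-team/churn-experiments",
)
task.experiments.append(ExperimentRef("mlflow", "run-a", 0.81))
task.experiments.append(ExperimentRef("mlflow", "run-b", 0.86))
task.experiments.append(ExperimentRef("mlflow", "run-c", 0.79))

best = task.best_experiment()
print(f"Best experiment for '{task.title}': {best.tracker}/{best.run_id}")
```

Keeping only a tracker name and run ID on the task means the project board never duplicates metrics history, which matches the "complementing, not replacing" split described above.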

Data Pipeline Visibility

Data work often invisible:
├─ Data collection (weeks)
├─ Data cleaning (weeks)
├─ Feature engineering (ongoing)
├─ Pipeline reliability (critical)

GitScrum tracking:
├─ Data tasks = first-class citizens
├─ Pipeline repos linked
├─ Data quality blockers visible
├─ 'Data ready' = real milestone

No more 'waiting on data' black hole.

Model Lifecycle Tracking

Model lifecycle:
├─ v1.0 - Baseline (deployed)
├─ v1.1 - Improved features (deployed)
├─ v1.2 - Architecture change (experiment)
├─ v2.0 - New approach (research)

Tracking approach:
├─ Sprint per model version
├─ Research tasks = timeboxed
├─ Deploy tasks = traditional
├─ Model repo = sprint anchor
├─ What's in prod is clear

Stakeholders see production progress, not research churn.
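The model lifecycle above can be read as a small table of versions and stages. The following sketch encodes exactly those four versions and answers the two questions stakeholders tend to ask: what is in production now, and what is still in progress. The `Stage` enum, the `ModelVersion` class, and the helper names are illustrative assumptions, not part of any GitScrum interface.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    RESEARCH = "research"
    EXPERIMENT = "experiment"
    DEPLOYED = "deployed"


@dataclass(frozen=True)
class ModelVersion:
    version: str  # e.g. "v1.1"
    summary: str
    stage: Stage


# The lifecycle exactly as listed in the section above.
lifecycle = [
    ModelVersion("v1.0", "Baseline", Stage.DEPLOYED),
    ModelVersion("v1.1", "Improved features", Stage.DEPLOYED),
    ModelVersion("v1.2", "Architecture change", Stage.EXPERIMENT),
    ModelVersion("v2.0", "New approach", Stage.RESEARCH),
]


def version_key(model: ModelVersion) -> tuple[int, ...]:
    """Turn 'v1.2' into (1, 2) so versions sort numerically, not as text."""
    return tuple(int(part) for part in model.version.lstrip("v").split("."))


def current_production(models: list[ModelVersion]) -> ModelVersion:
    """Return the newest version whose stage is DEPLOYED."""
    deployed = [m for m in models if m.stage is Stage.DEPLOYED]
    return max(deployed, key=version_key)


def in_progress(models: list[ModelVersion]) -> list[ModelVersion]:
    """Return versions not yet deployed, oldest first."""
    pending = [m for m in models if m.stage is not Stage.DEPLOYED]
    return sorted(pending, key=version_key)


print("In production:", current_production(lifecycle).version)
for model in in_progress(lifecycle):
    print(f"Coming: {model.version} ({model.stage.value}) - {model.summary}")
```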

Cross-Functional ML Teams

ML team composition:
├─ ML Engineers (modeling)
├─ Data Engineers (pipelines)
├─ MLOps/Platform (deployment)
├─ Product (requirements)
├─ Sometimes: Research Scientists

GitScrum supports:
├─ Different work types, one board
├─ Filter by role/work type
├─ Dependencies between tracks
├─ Blockers visible across functions
├─ Same visibility for all

No separate tools per function.

Stakeholder Communication

ML stakeholder challenge:
├─ 'When will it be ready?'
├─ 'Why is it taking so long?'
├─ 'What's the progress?'
├─ 'Is 80% accuracy good?'

GitScrum helps:
├─ Clear production milestones
├─ Research phase = timeboxed
├─ Progress = models deployed
├─ Metrics in task descriptions
├─ Wiki explains ML concepts

Stakeholders see what matters: what's in production, what's coming.

Failed Experiments Are Progress

ML reality:
├─ Experiment 1: Didn't beat baseline
├─ Experiment 2: Overfit
├─ Experiment 3: Too slow for inference
├─ Experiment 4: Promising!
├─ Experiment 5: Winner!

Traditional PM: 4 'failures'
ML reality: 4 learnings + 1 success

GitScrum approach:
├─ Research tasks = timeboxed investigation
├─ Outcome = decision, not always 'ship'
├─ 'Decided not to pursue' is valid
├─ Document learnings in wiki
├─ No fake 'completion' pressure

Honest tracking, not gaming metrics.

Notebook-to-Production Pipeline

Typical path:
├─ Jupyter notebook (exploration)
├─ Python scripts (refactoring)
├─ Model repo (production code)
├─ CI/CD pipeline (deployment)
├─ Monitoring (post-deploy)

GitScrum tracking:
├─ Exploration task → notebook commits
├─ Refactoring task → script commits
├─ Production task → model repo
├─ Deploy task → CI/CD runs
├─ Each phase Git-linked

See code journey from notebook to prod.
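As a rough illustration of the "each phase Git-linked" idea, the sketch below keeps a list of commit hashes per phase and reports how far a piece of work has travelled from notebook to production. The phase names follow the four tracked tasks above. The dictionary layout, the function names, and the commit hashes are made up for the example and do not describe how GitScrum stores links.

```python
from typing import Optional

# Phases in the order work moves through them (from the tracking list above).
PHASES = ("exploration", "refactoring", "production", "deploy")


def furthest_phase(linked_commits: dict[str, list[str]]) -> Optional[str]:
    """Return the latest phase that has at least one linked commit, or None."""
    reached = None
    for phase in PHASES:
        if linked_commits.get(phase):
            reached = phase
    return reached


def code_journey(linked_commits: dict[str, list[str]]) -> list[str]:
    """Summarise linked commits per phase, in pipeline order."""
    return [
        f"{phase}: {len(linked_commits.get(phase, []))} commit(s)"
        for phase in PHASES
    ]


# Hypothetical commit hashes linked to each phase's task.
links = {
    "exploration": ["a1b2c3d", "d4e5f6a"],
    "refactoring": ["0f9e8d7"],
    "production": [],
    "deploy": [],
}

print("Furthest phase reached:", furthest_phase(links))
for line in code_journey(links):
    print(line)
```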

ML-Specific Metrics

What ML teams measure:
├─ Model accuracy/metrics (in MLflow)
├─ Inference latency (in monitoring)
├─ Experiment velocity (in tracker)
├─ Production deployment frequency (project metric)
├─ Research-to-production time (project metric)

GitScrum tracks the project metrics. MLflow tracks the model metrics. Both needed, different purposes.

Pricing for ML Teams

Small ML team (3 engineers):
├─ $8.90/month (1 paid user)
├─ All features included
├─ Git integration for model repos

Mid-size (10 engineers):
├─ $71.20/month
├─ Multi-repo support
├─ Research + production tracks

Large ML org (30 engineers):
├─ $249.20/month
├─ Same features as small team
├─ Multiple project support

$8.90/user/month.
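The three tiers are consistent with a simple rule: the first two users are free and every additional user costs $8.90 per month. A small sketch of that arithmetic follows. The function name is illustrative, and the rule is inferred from the figures quoted on this page rather than taken from an official price calculator.

```python
from decimal import Decimal

PRICE_PER_PAID_USER = Decimal("8.90")  # USD per user per month, as quoted
FREE_USERS = 2                         # "2 users free forever"


def monthly_cost(team_size: int) -> Decimal:
    """Monthly cost in USD for a team, assuming the first two users are free."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    paid_users = max(0, team_size - FREE_USERS)
    return PRICE_PER_PAID_USER * paid_users


for size in (3, 10, 30):
    print(f"{size} engineers: ${monthly_cost(size)}/month")
```

`Decimal` is used instead of `float` so that currency amounts such as 71.20 stay exact.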

2 users free forever.

Compared to General PM Tools

Jira for ML:
├─ Designed for software sprints
├─ Story points meaningless for research
├─ No concept of experiments
├─ Overhead kills research velocity

Asana/Monday:
├─ Marketing-oriented
├─ No Git integration
├─ No technical workflow support
├─ Wrong abstraction entirely

GitScrum:
├─ Git-native (model repos)
├─ Flexible for research
├─ Structured for production
├─ No forced sprint methodology

Real ML Team Experience

'We tried Jira. Data scientists hated it. We tried Notion. No Git integration. We tried nothing - chaos. GitScrum hits the sweet spot: enough structure for stakeholder visibility, enough flexibility for research reality. The Git integration means model deployments actually show on the board.'
- ML Engineering Lead, Series B Startup

Day-to-Day Workflow

Weekly planning (not daily standup):
├─ Research track: What are we investigating?
├─ Production track: What ships this week?
├─ Blockers: Data quality?
├─ Timeboxes: When do we decide on research?

Async updates:
├─ Commit messages = status update
├─ Board reflects Git activity
├─ No daily status meetings
├─ Deep work protected

Monthly stakeholder review:
├─ What deployed (from board)
├─ What we learned (from wiki)
├─ What's next (from backlog)

MLOps Integration

MLOps workflow:
├─ Model training triggers
├─ CI/CD for model deployment
├─ Model registry updates
├─ A/B test launches
├─ Monitoring alerts

GitScrum role:
├─ Deploy tasks link to CI/CD
├─ A/B test tasks track experiments
├─ Alert tasks for drift issues
├─ Registry version = task completion

Not replacing MLOps tools.

Tracking the human workflow.

Start Free Today

1. Sign up (30 seconds)
2. Connect model repo (GitHub/GitLab/Bitbucket)
3. Create research + production tracks
4. Ship models, not status updates

ML-aware project management.

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.