The retrospective generated five action items.

The team implemented three of them. Did they help?

Nobody knows. There's no before-and-after data.

No measurement of whether cycle time improved. No tracking of whether defect rates dropped.

Just a vague sense that 'things seem better.' Maybe they are. Maybe the team just got used to the problems.

Maybe the improvement came from something unrelated to the retrospective actions: a new hire, a simpler project, external factors. Without data, you can't distinguish real improvement from recency bias or survivorship effects.

You can't identify which changes worked and which were theater. You can't make evidence-based decisions about process.

The team keeps doing retrospectives, keeps generating action items, but has no feedback loop to know if any of it matters.
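A feedback loop can start small. As a minimal sketch, assuming the team can export a start and finish date for each completed item, the snippet below compares median cycle time before and after an action item took effect. The records, the adoption date, and the function names are all hypothetical and exist only for illustration.

```python
from datetime import date
from statistics import median

# Hypothetical records: (date work started, date work finished) per completed item.
completed_items = [
    (date(2024, 3, 1), date(2024, 3, 9)),
    (date(2024, 3, 4), date(2024, 3, 10)),
    (date(2024, 3, 11), date(2024, 3, 21)),
    (date(2024, 4, 2), date(2024, 4, 6)),
    (date(2024, 4, 8), date(2024, 4, 13)),
    (date(2024, 4, 15), date(2024, 4, 18)),
]

# Hypothetical date on which the retrospective action item took effect.
action_adopted_on = date(2024, 4, 1)


def median_cycle_days(items):
    """Median number of days between start and finish.

    Raises statistics.StatisticsError if items is empty.
    """
    return median((done - started).days for started, done in items)


def before_and_after(items, cutoff):
    """Split items by start date relative to the cutoff and compare medians."""
    before = [item for item in items if item[0] < cutoff]
    after = [item for item in items if item[0] >= cutoff]
    return median_cycle_days(before), median_cycle_days(after)


before_days, after_days = before_and_after(completed_items, action_adopted_on)
print(f"median cycle time before: {before_days} days, after: {after_days} days")
```

A lower number after the cutoff is a signal, not proof. A new hire or a simpler project can move the median too, so it helps to record what else changed in the same period.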

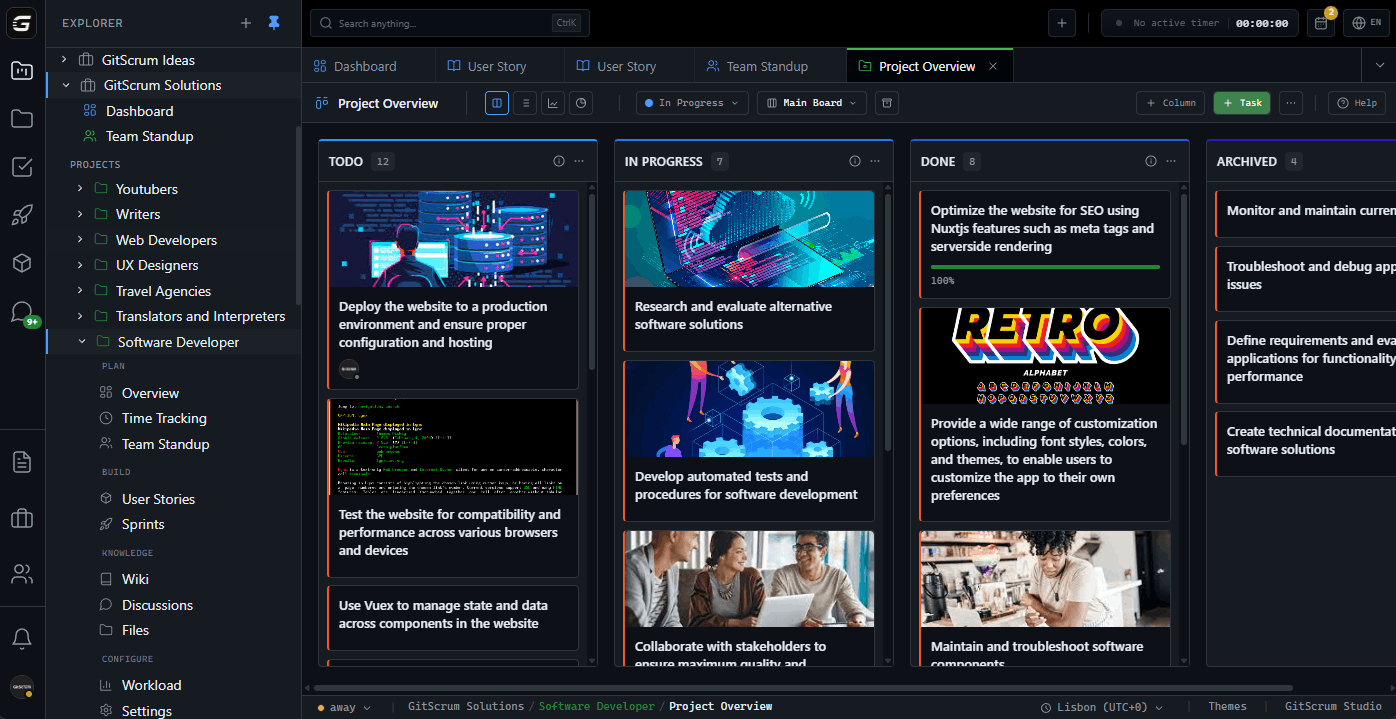

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.