Data Engineering Is Invisible Work Data engineering reality: ├─ ETL pipelines (extract, transform, load) ├─ Data warehouse design ├─ dbt models and transformations ├─ Airflow/Dagster DAG development ├─ Data quality monitoring ├─ Feature engineering for ML ├─ Analytics table maintenance ├─ Infrastructure scaling ├─ Schema migrations ├─ Data governance compliance When it works, nobody notices.

When it breaks, everyone notices. Why Traditional PM Fails Data Teams 'Build feature X': ├─ Product team sees: Button on page ├─ Data team sees: │ ├─ Raw data extraction │ ├─ Transformation logic │ ├─ Quality validation │ ├─ Dimensional modeling │ ├─ Aggregation tables │ ├─ Incremental loading │ ├─ Backfill historical data │ ├─ Documentation One task becomes ten.

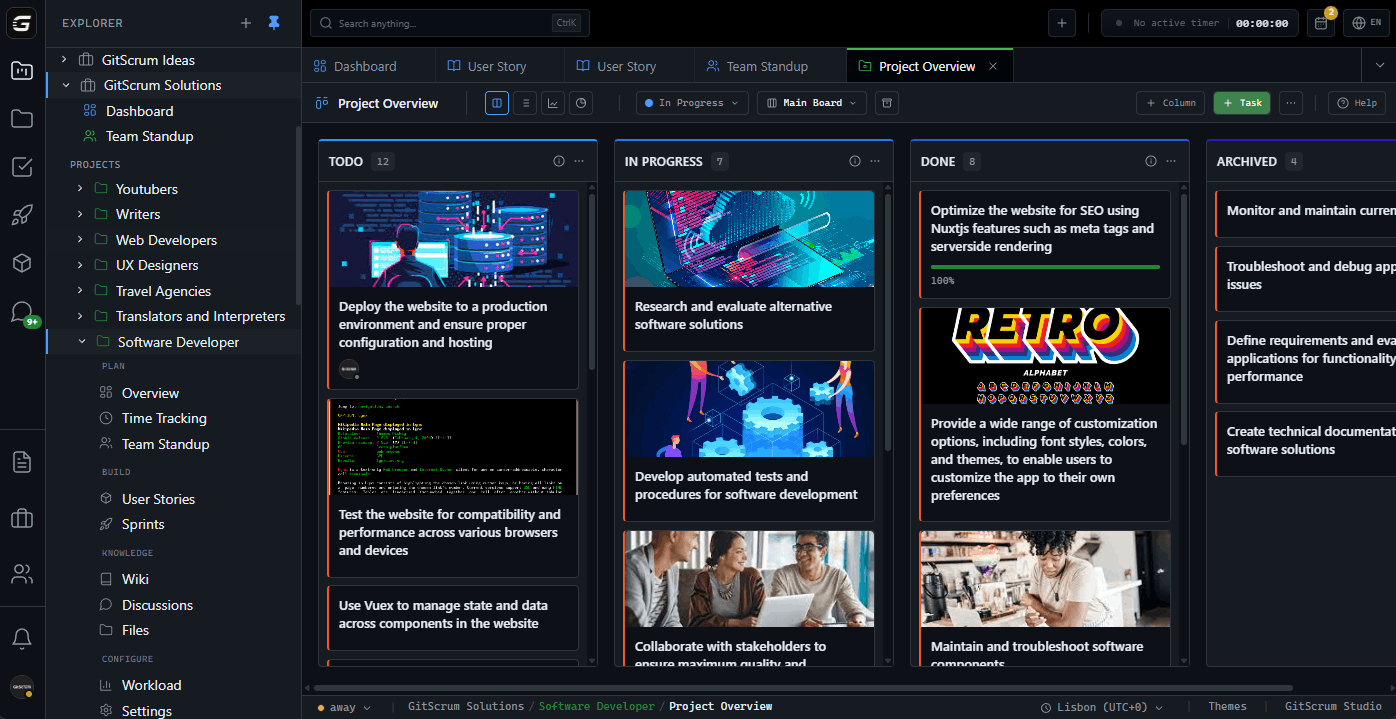

Traditional PM: 'Data team is slow.' GitScrum for Data Engineering Data-aware tracking: ├─ Pipeline tasks with dependencies ├─ dbt model tasks with schema ├─ Data quality gates ├─ SLA tracking visibility ├─ Git-linked to data repos ├─ Infrastructure work visible Show the iceberg below the surface. Pipeline Development Tracking Pipeline lifecycle: ├─ Requirements (what data needed) ├─ Source analysis (raw data exploration) ├─ Schema design (target structure) ├─ Transformation logic (dbt/Spark) ├─ Quality tests (data validation) ├─ Orchestration (Airflow DAG) ├─ Monitoring (alerts, SLAs) ├─ Documentation (lineage, usage) GitScrum approach: ├─ Pipeline epic with phases ├─ Task per phase ├─ Checklists for requirements ├─ Git commits link to tasks ├─ 'Pipeline: sourceusers → 7/9 phases' dbt Project Management dbt workflow: ├─ models/ directory ├─ Staging models ├─ Intermediate models ├─ Mart models (business logic) ├─ Tests (not null, unique, etc.) ├─ Documentation (schema.yml) ├─ CI/CD (dbt Cloud or custom) GitScrum dbt tracking: ├─ Model tasks linked to Git ├─ 'Add dimcustomers model' ├─ Commit links to model file ├─ Test tasks as subtasks ├─ Schema changes visible ├─ Wiki has dbt standards Airflow DAG Development Orchestration tasks: ├─ DAG design (task dependencies) ├─ Operator implementation ├─ Sensor configuration ├─ Connection setup ├─ Variable management ├─ Testing (local + staging) ├─ Production deployment ├─ Monitoring configuration GitScrum tracking: ├─ DAG task with checklist ├─ [x] Operators defined ├─ [x] Sensors configured ├─ [x] Connections set up ├─ [ ] Staging test pass ├─ [ ] Production deployed ├─ Git commits link to DAG files Data Quality Gates Quality workflow: ├─ Schema validation ├─ Row count checks ├─ Null value thresholds ├─ Uniqueness constraints ├─ Referential integrity ├─ Business rule validation ├─ Freshness checks ├─ Anomaly detection GitScrum approach: ├─ Quality task per pipeline ├─ Checklist = quality checks ├─ [x] Not null: userid ├─ [x] Unique: transactionid ├─ [ ] Freshness < 1 hour ├─ Pipeline blocked until green SLA Tracking Visibility SLA reality: ├─ 'Daily report by 7 AM' ├─ 'Real-time dashboard < 5 min lag' ├─ 'ML features updated hourly' ├─ Stakeholders don't see the work ├─ They see: late or not late GitScrum SLA tasks: ├─ SLA task per commitment ├─ Description: requirements ├─ Linked to pipeline tasks ├─ SLA breach = P0 task ├─ Historical tracking for patterns Make reliability work visible.

Data Warehouse Migration Migration project: ├─ Schema analysis ├─ Table-by-table migration plan ├─ Transformation updates ├─ Parallel running period ├─ Validation per table ├─ Cutover coordination ├─ Rollback plan GitScrum migration tracking: ├─ Migration epic ├─ Task per table/schema ├─ Checklist: migrated, validated, cutover ├─ Dependency graph visible ├─ Rollback docs in wiki Feature Store Development ML feature engineering: ├─ Feature definition ├─ Transformation logic ├─ Online vs offline stores ├─ Backfill historical features ├─ Documentation for ML team ├─ Monitoring feature drift GitScrum approach: ├─ Feature task per feature set ├─ Link to ML team's model tasks ├─ 'userpurchasehistory feature' ├─ Backfill as separate task ├─ Wiki documents feature catalog Schema Change Management Schema evolution: ├─ New column request ├─ Impact analysis ├─ Migration script ├─ Backfill (if needed) ├─ Downstream updates ├─ Documentation update ├─ Communication to consumers GitScrum tracking: ├─ Schema change task ├─ Linked to requestor's feature task ├─ Checklist: analysis, migration, backfill ├─ Consumer notification task ├─ Git commit to migration scripts Cross-Team Dependencies Data team serves: ├─ Product team (dashboards) ├─ ML team (features) ├─ Finance team (reports) ├─ Marketing team (analytics) ├─ Operations team (monitoring) GitScrum coordination: ├─ Request tasks from other teams ├─ Priority visible across teams ├─ Dependencies linked ├─ 'Blocked: waiting for ML feature spec' ├─ No hidden queues Infrastructure Work Tracking Infra tasks: ├─ Cluster scaling ├─ Cost optimization ├─ Performance tuning ├─ Security updates ├─ Upgrade planning ├─ Disaster recovery testing GitScrum approach: ├─ Infrastructure epic ├─ Tasks with priority ├─ 'Upgrade Spark 3.4 → 3.5' ├─ Linked to performance issues ├─ Wiki documents architecture Pricing for Data Teams Solo data engineer: $0 (free) 2-person team: $0 (free) 5-person team: $26.70/month 10-person team: $71.20/month 20-person data org: $160.20/month $8.90/user/month. 2 users free forever.

No data engineering tier. No per-pipeline pricing.

All features included. Toolchain Integration Data stack: ├─ dbt (transformation) ├─ Airflow/Dagster (orchestration) ├─ Spark (processing) ├─ Snowflake/BigQuery (warehouse) ├─ Fivetran/Airbyte (ingestion) ├─ Great Expectations (quality) ├─ DataHub/Atlan (catalog) GitScrum fits: ├─ Git repos (dbt, Airflow DAGs) ├─ Task tracking (project management) ├─ Wiki (documentation) ├─ Doesn't replace data tools ├─ Complements them Documentation in Wiki Data documentation: ├─ Pipeline architecture ├─ Data dictionary ├─ dbt model documentation ├─ SLA commitments ├─ Runbooks (incident response) ├─ Onboarding guides GitScrum wiki: ├─ All docs in one place ├─ Linked from tasks ├─ Searchable ├─ Not in random Confluence pages ├─ Not lost in Slack Real Data Team Experience 'Data engineering is invisible until it breaks.

Our stakeholders thought we "just ran some queries". Now they see: 47 pipeline tasks, 12 quality gates, 8 SLA commitments.

They understand why "add one column" takes a week. The Git integration means our dbt commits show up in tasks automatically.

Finally, visibility for invisible work.' - Data Engineering Lead, Series B Startup Daily Workflow Morning: ├─ Check pipeline status (Airflow/Dagster) ├─ Check board: Any P0 issues? ├─ Update tasks: Progress on current work ├─ Review Git: PRs to review Development: ├─ Pick task from board ├─ dbt/Spark development ├─ Git commits link automatically ├─ Update task checklist ├─ Code review via Git End of day: ├─ Task status updated ├─ Tomorrow's priorities clear ├─ Blockers visible ├─ 10 minutes, done Incident Response Pipeline failure: ├─ Alert received ├─ P0 task created ├─ Investigation documented ├─ Fix task linked ├─ Root cause analysis ├─ Prevention task created ├─ Post-mortem in wiki GitScrum tracking: ├─ Incident visible to stakeholders ├─ Progress tracked ├─ Fix commits linked ├─ Post-mortem accessible ├─ Pattern recognition over time Start Free Today 1.

Sign up (30 seconds) 2. Connect dbt/Airflow repos 3.

Create pipeline tasks 4. Make data work visible Data engineering, finally visible.

The GitScrum Advantage

One unified platform to eliminate context switching and recover productive hours.